MetaMVUC: Active Learning for Sample-Efficient Sim-to-Real Domain Adaptation in Robotic Grasping

Published in IEEE Robotics and Automation Letters (RA-L), 2025

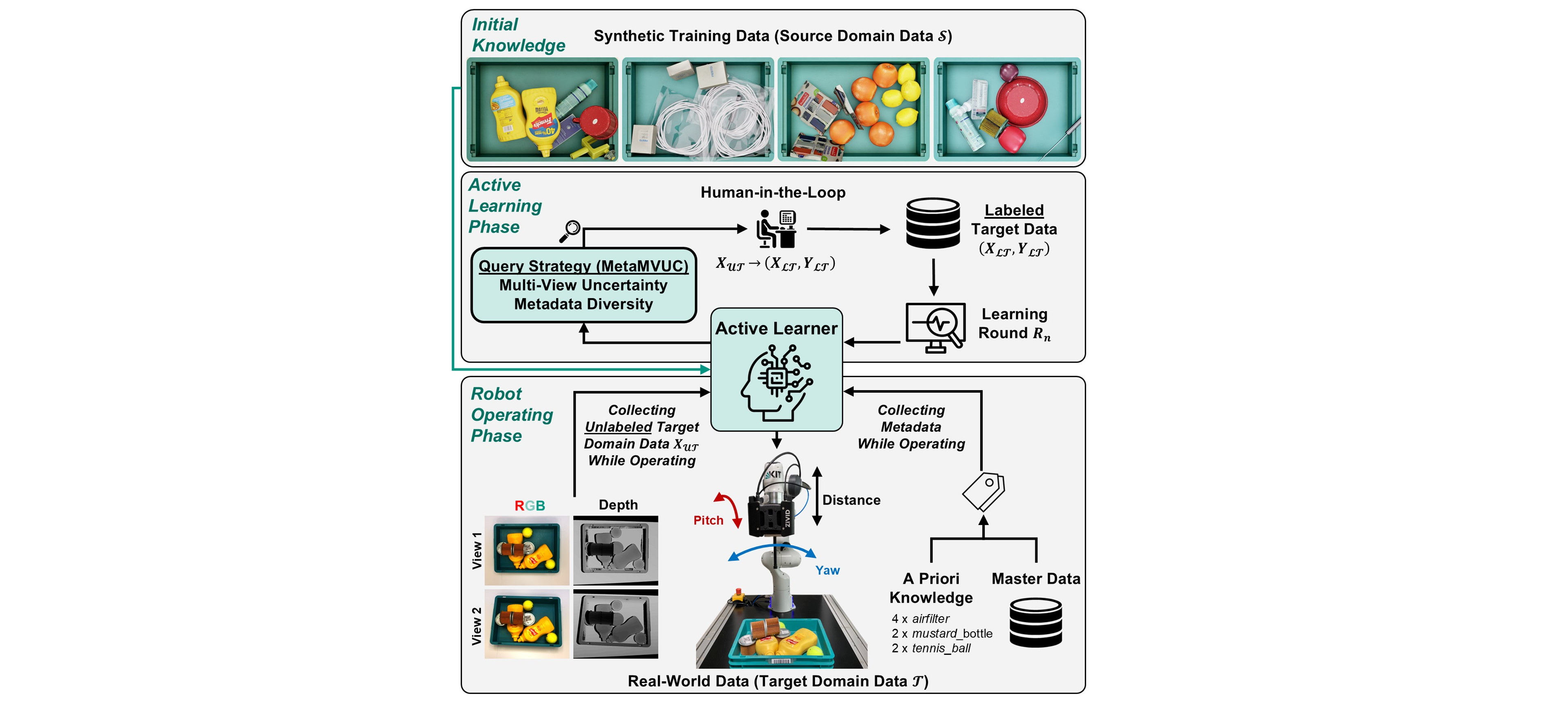

Learning-based robotic grasping systems typically rely on large-scale datasets for training. However, collecting such datasets in the real world is both costly and time-consuming. Synthetic data generation is a cost-effective alternative, but models trained solely on synthetic data often struggle with zero-shot real-world performance due to the large domain gap between synthetic and real-world data. To address this trade-off between dataset cost and model performance, we propose an active learning framework designed for fast and sample-efficient sim-to-real domain adaptation. The framework uses synthetic data as an initial knowledge base and incrementally adapts to the target data domain by selecting the most informative real-world samples for further model training. For this purpose, we propose a novel hybrid query strategy, MetaMVUC, which leverages multi-view uncertainty and metadata diversity. MetaMVUC assesses model uncertainty by comparing model predictions across multiple viewpoints and identifies the samples with the highest uncertainty. Additionally, since robots in industry or logistics often operate in environments rich in metadata, MetaMVUC leverages this information to ensure diverse and well-distributed sample selection. Experimental results demonstrate the effectiveness of the proposed framework. With a limited annotation budget of 16 samples, a robot trained using MetaMVUC achieves a grasp success rate of 87.7%. Increasing the budget to 40 samples improves the grasp success rate to 96.7%. These results outperform zero-shot sim-to-real transfer by 17.4 and 26.4 percentage points, respectively.
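The hybrid query idea described in the abstract can be illustrated with a minimal Python sketch. This is not the authors' implementation: it assumes that each unlabeled sample carries one scalar prediction score per viewpoint and a single metadata label, it uses the variance of the per-view scores as a stand-in for multi-view uncertainty, and it approximates metadata diversity by a round-robin over metadata groups. The names `multi_view_uncertainty`, `select_queries`, `view_scores`, and `meta` are hypothetical.

```python
from collections import defaultdict
from statistics import pvariance


def multi_view_uncertainty(view_scores):
    """Assumed uncertainty proxy: variance of a sample's per-view scores."""
    return pvariance(view_scores)


def select_queries(pool, budget):
    """Pick up to `budget` sample ids for annotation.

    `pool` is a list of dicts with the (hypothetical) keys
    'id', 'meta' (a metadata label) and 'view_scores' (one score per view).
    """
    # Group the unlabeled samples by their metadata label.
    groups = defaultdict(list)
    for sample in pool:
        groups[sample["meta"]].append(sample)

    # Within each group, rank samples from most to least uncertain.
    for members in groups.values():
        members.sort(
            key=lambda s: multi_view_uncertainty(s["view_scores"]),
            reverse=True,
        )

    # Round-robin over the groups so the selection stays spread across
    # metadata values while still preferring uncertain samples.
    selected = []
    while len(selected) < budget and any(groups.values()):
        for key in sorted(groups):
            if groups[key] and len(selected) < budget:
                selected.append(groups[key].pop(0)["id"])
    return selected
```

In an active learning loop, the returned ids would be annotated, added to the training set, and the model retrained before the next query round. How uncertainty and diversity are actually combined in MetaMVUC is specified in the paper, not in this sketch.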

Recommended citation: M. Gilles, K. Furmans and R. Rayyes, "MetaMVUC: Active Learning for Sample-Efficient Sim-to-Real Domain Adaptation in Robotic Grasping," IEEE Robotics and Automation Letters, 10(4), pp.3644-3651, April 2025, doi: 10.1109/LRA.2025.3544083.
